Is the Wyze cam accessible locally using just a browser? If so, you could use my plugin below to replace the image on the control tab with an iframe loading the camera's web page. Then you're not re-encoding the stream on the Pi, which I believe introduces some latency in the stream.

I've not seen any way to embed an RTSP stream in a browser without re-encoding/transcoding it into something like MJPEG. If someone is aware of a way, it would save a lot of trouble by not having to run additional services.

Ideally I'd love to use the Wyze cam for timelapses and Octolapse. For $25, the image quality is pretty comparable to something like a C920, which is ~$70 new.

Yeah, I searched a little last night on that very topic, and it doesn't look like there is a simple way of embedding an RTSP stream without using VLC or a similar ActiveX control (not usable in all browsers) or Flash.

I encourage you knowledgeable people to find a way. Wyzecam is worth it.

I may play with this, but it will be roughly the same thing that @SrgntBallistic posted above; instead of using ffmpeg to configure the server, it will use motionEye to do all the conversion work. Of course, it's probably using ffmpeg in the background; it's just a little more user-friendly to add a camera in its web interface than to deal with all the command-line stuff manually.

+1

Hope this gets enough requests so someone can make a reliable plugin

So after further investigation, couldn't the Dafang Hacks firmware be used directly within OctoPrint via the MJPEG stream it provides? That would require only modifying the Wyze cam, no plugins required.

So I tried testing this and it's a no go it seems. The MJPEG stream is still embedded in an RTSP wrapper, so you can't use it like I thought.

Hi all, my first post here, and I wanted the same thing: using a Wyze cam in OctoPrint.

If you are still looking for this, I have written some instructions on how to get this working. Dafang Hacks are required, but it works quite well. Streaming over HTTP loses some quality, but if you have some spare CPU power available it can look quite fantastic. On a Raspberry Pi you will need to make concessions in terms of bit rate and frame rate.

This is done using vlc as ffserver was not an option for me.

Follow the instructions here.

Hi,

I was able to get the VLC option working for streaming (not screenshots) using the RTSP firmware from Wyze. I was also able to get the ffserver and ffmpeg option working for both streaming and snapshots when running them in a terminal window. The problem is getting ffserver and ffmpeg to work when they are not started from a terminal.

I set up a crontab for the pi user with successive @reboot entries. ffserver runs, but ffmpeg does not:

@reboot ffserver &

@reboot ffmpeg -rtsp_transport tcp -i 'rtsp://username:password@cameraip/live' http://127.0.0.1:8090/camera.ffm >/dev/null 2>&1

I then tried making a script that gets called by the crontab to just launch ffmpeg and then tried to have it launch both ffserver and ffmpeg. I have read a lot of posts about ffmpeg being launched from crontab and a script (.sh) and tried everything mentioned but no luck. For those in here that have gotten this to work, what is the trick to get it to launch at boot of your raspberry pi?

Thanks!
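For anyone stuck at the same point, here is a minimal sketch of a single launcher script that cron can call, instead of two separate @reboot lines. It assumes that one likely cause is timing and environment: cron runs jobs with a minimal PATH, and both @reboot entries start at once, so ffmpeg may try to connect before ffserver is listening. The script path, the `wait_for` and `start_stream` helper names, the log file, and the use of curl as a readiness probe are all assumptions for illustration, not something taken from the posts above.

```shell
#!/bin/sh
# Hypothetical launcher, e.g. saved as /home/pi/start_stream.sh and called from crontab:
#   @reboot /home/pi/start_stream.sh start >/tmp/stream.log 2>&1
# Logging to a file instead of /dev/null makes boot-time failures visible.

# cron provides only a minimal PATH, so extend it explicitly.
PATH=/usr/local/bin:/usr/bin:/bin:$PATH
export PATH

# Placeholders copied from the crontab entry in this thread; substitute real values.
RTSP_URL="${RTSP_URL:-rtsp://username:password@cameraip/live}"
FEED_URL="${FEED_URL:-http://127.0.0.1:8090/camera.ffm}"

# wait_for CMD...: retry CMD until it succeeds, at most WAIT_TRIES times,
# pausing WAIT_DELAY seconds between attempts.
wait_for() {
    tries="${WAIT_TRIES:-30}"
    while [ "$tries" -gt 0 ]; do
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        tries=$((tries - 1))
        sleep "${WAIT_DELAY:-1}"
    done
    return 1
}

start_stream() {
    ffserver &
    # Assumption: curl is installed; it fails while the port still refuses connections.
    wait_for curl -s -o /dev/null http://127.0.0.1:8090/ || return 1
    exec ffmpeg -rtsp_transport tcp -i "$RTSP_URL" "$FEED_URL"
}

# Launch only when called with "start", so the file can be sourced safely.
if [ "${1:-}" = "start" ]; then
    start_stream
fi
```

If ffmpeg still does not start, the log file should at least show whether the failure is a missing binary, a refused connection, or an unreachable camera.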

Hello everyone!

I am wondering if there has been any progress on this or if you can help me. I have tried the instructions from both @SrgntBallistic and @atom6 and get stuck on both. When I try to run 'ffserver &' I get

"[1] 12580

pi@octopi : ~ $ -bash: ffserver: command not found";

when I try the VLC approach, I run './http_stream.sh' and get stuck in a similar way: a blank line and nothing happens. I have updated the firmware on the Wyze cam and get a stream in VLC; I just can't get it set up and running in OctoPi. I'm fairly novice at programming things like this (meaning I follow instructions fairly well but couldn't make anything up on my own). Thanks in advance for any input!
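A note on the error above: "command not found" means the shell cannot find an ffserver binary at all. ffserver was removed from FFmpeg as of the 4.0 release, so a current ffmpeg package will not include it. As a quick sketch, the following reports which of the tools mentioned in this thread are actually installed; `check_tools` is just a helper name made up for this example.

```shell
# check_tools NAME...: print, for each tool name, where it is installed or that it is missing.
check_tools() {
    for tool in "$@"; do
        if command -v "$tool" >/dev/null 2>&1; then
            echo "$tool: found at $(command -v "$tool")"
        else
            echo "$tool: not found"
        fi
    done
}

# The three tools used by the approaches in this thread.
check_tools ffmpeg ffserver cvlc
```

If ffserver is reported as not found while ffmpeg is present, the VLC route is probably the easier one to pursue on that system.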

I've been closely reading this topic, as I just got OctoPrint installed today on an RPi4 and I have a Wyze V2 ready to go for this! Have there been any updates on getting this optimized?

I have been playing around tonight to reduce the load of OctoPrint streaming from my Wyze on my Pi 3+, and have reduced the load by a lot.

This uses the official RTSP firmware from Wyze:

cvlc -R rtsp://<rtsp user>:<rtsp password>@<rtsp ip addr>/live --sout-x264-preset fast --sout='#transcode{acodec=none,vcodec=MJPG,vb=1000,fps=0.5}:standard{mux=mpjpeg,access=http{mime=multipart/x-mixed-replace; boundary=--7b3cc56e5f51db803f790dad720ed50a},dst=:8899/videostream.cgi}' --sout-keep

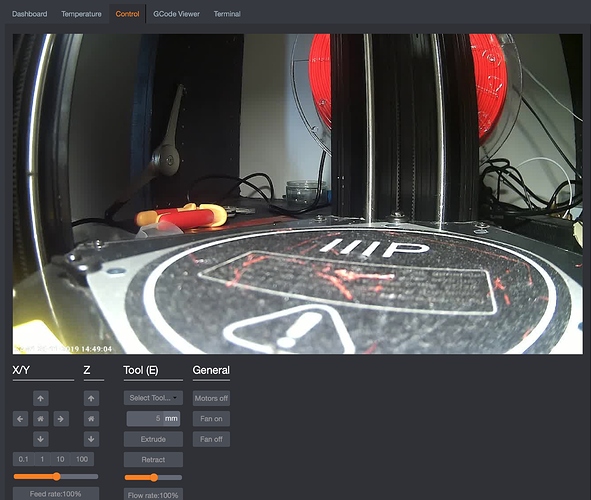

Now, in the OctoPrint settings on the webcam tab, under Stream URL, I put in: http://<octoprint ip address>:8899/videostream.cgi
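Before pointing OctoPrint at the URL, it can help to confirm that VLC is really serving an MJPEG stream there. A rough sketch, assuming curl is available: fetch only the response headers and look for the multipart/x-mixed-replace content type that the cvlc command sets. The helper names `is_mjpeg_header` and `check_stream` are invented for this example.

```shell
# is_mjpeg_header: succeeds if the HTTP headers on stdin announce a multipart MJPEG stream.
is_mjpeg_header() {
    grep -qi '^content-type:[[:space:]]*multipart/x-mixed-replace'
}

# check_stream URL: dump the response headers, discard the body, and give up after
# 5 seconds (the stream itself never ends), then test the headers.
check_stream() {
    curl -s -m 5 -D - -o /dev/null "$1" | is_mjpeg_header
}

# Example with the placeholder address from the post; substitute your own:
# check_stream "http://<octoprint ip address>:8899/videostream.cgi" && echo "stream looks OK"
```

If the check fails, the problem is on the VLC side rather than in the OctoPrint settings.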

I made a Docker container for it. https://hub.docker.com/r/eroji/rtsp2mjpg

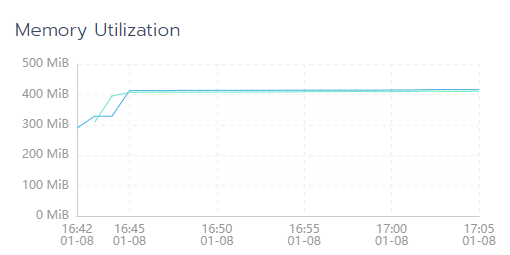

UPDATE: I updated the base image to Alpine. Now the image is 21MB compressed and uses 100MB less memory when running. I noticed the original Ubuntu image with its flavor of ffmpeg was leaking memory over time, and the stream would still die after about a day of run time. Hopefully the Alpine one works better. Memory usage looks very steady and flat so far.

I updated the GitHub with docker-compose. You can pull it down yourself and run it as a service in docker.

Just run docker-compose up -d after cloning the repo. This will include an nginx proxy that listens on port 80, so you can get to the live stream at http://<ip>/live.mjpg and the still snapshot at http://<ip>/still.jpg.

I played with the ffmpeg flags quite a bit and got it relatively stable. However, every so often the ffmpeg process would still die with an error like this.

[rtsp @ 0x7ffbe78c3460] CSeq 11 expected, 0 received.

My ffmpeg command is currently:

/usr/bin/ffmpeg -hide_banner -loglevel info -rtsp_transport tcp -nostats -use_wallclock_as_timestamps 1 -i rtsp://user:pass@mycamera/live -async 1 -vsync 1 http://127.0.0.1:8090/feed.ffm

If anyone here is an ffmpeg expert, feel free to comment on how I can improve it to make it completely stable!
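Until someone finds flags that stop the process from dying, one workaround is to restart ffmpeg whenever it exits. This is only a sketch and does not address the CSeq error itself; the `keep_running` helper, its restart limit, and the RESTART_DELAY variable are assumptions made for this example.

```shell
# keep_running MAX CMD...: run CMD, and start it again each time it exits.
# MAX is the maximum number of starts (0 means no limit).
# Prints the number of starts on stdout once the limit is reached.
keep_running() {
    max="$1"
    shift
    runs=0
    while :; do
        if "$@"; then
            status=0
        else
            status=$?
        fi
        runs=$((runs + 1))
        echo "command exited with status $status (start $runs)" >&2
        if [ "$max" -gt 0 ] && [ "$runs" -ge "$max" ]; then
            break
        fi
        # Pause before restarting so a persistent failure does not spin the CPU.
        sleep "${RESTART_DELAY:-5}"
    done
    echo "$runs"
}

# Example with the command from the post (placeholder credentials and host):
# keep_running 0 /usr/bin/ffmpeg -hide_banner -loglevel info -rtsp_transport tcp \
#     -nostats -use_wallclock_as_timestamps 1 -i rtsp://user:pass@mycamera/live \
#     -async 1 -vsync 1 http://127.0.0.1:8090/feed.ffm
```

The stream will still drop for a few seconds on each restart, so this keeps the feed alive rather than making it seamless.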

I've never used docker. This is meant to run on a Raspberry Pi? Could you point me in the direction of some stuff to get up to speed? Thanks

I might give this a try. Are you kicking it off in a bashrc file or manually running it?

If you use docker-compose, it will create a persistent instance of the Docker containers. Now, I didn't test it on a Pi, so the base image may need to be changed to support the ARM platform. Let me know if that's the case and I will update it to include an ARM alternative.

In theory you could use the webcamstreamer plugin to do this by modifying the advanced setup section and matching your ffmpeg command I suppose.

In either case, whether you do that or try to run your Docker image (once made ARM compatible) on an OctoPi instance, you'd have to disable the webcamd service in order to release the lock on the video device. And if your Docker image is using port 80, I'm not sure how that would play out, since haproxy forwards port 80 to OctoPrint's port 5000 by default.

Looks like the docker-compose.yaml file is missing from the repo...